"Put all your eggs in one basket and then watch that basket."

--Mark Twain, Pudd'nhead Wilson

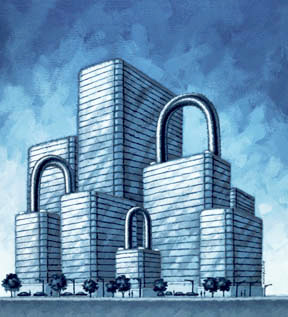

Most cyber attacks and breaches do not take the form of bad actors storming the data center or network perimeter. Threats typically move from the data center out, whether as malware or as an insider exfiltrating data. Indeed, today's network perimeter is increasingly not a single physical or virtual place, yet much of the industry debate still focuses on the perimeter.

Enterprise IT and security professionals must therefore take a different InfoSec perspective. Here are seven considerations every security manager must keep in mind going forward.

1. Secure the Right Boundary. The evolution of dynamic, multi-tenant cloud architectures and the reality of distributed computing stacks have effectively destroyed the foundational elements of perimeter security. If you focus only on the edge of the data center, you are ignoring the largest part of the attack surface: the inter-workload communications that never traverse the perimeter. Security leaders must focus on the intra- and inter-data center architecture challenges posed by new software and cloud computing infrastructures.

2. Security Should Be Part of the DevOps Cycle. Security must be built into applications, not bolted on afterward. The enterprise increasingly relies on agile software development, but the lack of correspondingly fast-moving security approaches effectively increases the risk of a breach. You cannot build and deploy applications in a distributed application and computing environment and then rely on a static, hierarchical security model built upon chokepoints, infrastructure control points, and organizational silos.

3. Ports Still Matter. If you leave the front door open, will you be surprised if someone walks in? How well are you keeping an eye on port controls within workloads, whether they run in your data center or have moved to public clouds? Some pretty big breaches have happened because a development server was open to the Internet. Adopting whitelist models, in which only explicitly approved ports and connections are permitted, reduces the attack surface.

4. Reduce the Complexity. Related to the port issue, most enterprises today suffer from "policy debt," because they have stacked incremental controls such as firewall rules on their perimeter security. (Why doesn't anyone decommission extinct rules?) Last year I met with a security team from a large enterprise that told me they had 2.5 million firewall rules. (Yes, you read that right.) I asked if that number made them feel more in control. They said no, it made them feel less secure, because they understood less of their own situation. Policy debt drives up cost and complexity and leaves open avenues for potential infiltration and exfiltration.

5. Encrypt Critical Data. The traditional approach to encrypting inter-workload traffic was to string a VLAN from a workload to a firewall, create an encrypted tunnel to another firewall, and then reverse the process on the other side. This has been time-consuming in traditional data center environments and nearly impossible in heterogeneous cloud environments such as those of the big cloud providers, which are inherently multi-tenant. This must change.

6. Monitor and Alert in Real Time. Visibility and monitoring are critical to keeping a cloud and data center architecture secure. Moreover, anomalies and policy violations must be flagged in real time. Real-time monitoring and policy alerting can reduce the time it takes to detect the infection of other machines from months to minutes and reduce the collateral damage on the path to remediation.

7. Don't Choose Between Network and Host. I have a friend who is a foreign policy reporter. When asked why he travels so much, he responds: "If you don't go, you don't know." Because vendors traditionally can track security only on the host or only on the network, they promote the benefits of their own approach and warn of the perils of the other. Today, the only way to survive the increasingly sophisticated attack environment is to understand the context of both the computing (think actual processes and roles) and the network (which tracks the flows).
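To make points 3, 6, and 7 a little more concrete, here is a minimal sketch in Python of a whitelist check that looks at both host context (workload role and process) and network context (the flow's destination and port) and flags anything not explicitly permitted as it arrives. Everything in it is an assumption made for illustration: the `Flow` record, the role names, the ports, and the `ALLOWED` set are hypothetical and do not come from any particular product.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Flow:
    """One observed connection, with host and network context (hypothetical schema)."""
    src_role: str   # role of the sending workload (host context)
    dst_role: str   # role of the receiving workload
    dst_port: int   # destination port (network context)
    process: str    # process that opened the connection (host context)


# Hypothetical whitelist: only these (source role, destination role, port, process)
# combinations are permitted. Everything else is treated as a violation.
ALLOWED = {
    ("web", "app", 8443, "nginx"),
    ("app", "db", 5432, "java"),
}


def violations(flows: Iterable[Flow]) -> Iterator[Flow]:
    """Yield each flow that the whitelist does not explicitly permit."""
    for flow in flows:
        key = (flow.src_role, flow.dst_role, flow.dst_port, flow.process)
        if key not in ALLOWED:
            yield flow


# Illustrative input; in practice this would be a live stream of flow records.
observed = [
    Flow("web", "app", 8443, "nginx"),
    Flow("app", "db", 5432, "java"),
    Flow("web", "db", 5432, "bash"),  # a web server talking straight to the database
]

for bad in violations(observed):
    print(f"ALERT: {bad.process} on {bad.src_role} -> {bad.dst_role}:{bad.dst_port}")
```

Because `violations` is a generator, it can consume flows one at a time rather than in a batch, which is the property real-time alerting depends on. Note that the third flow uses a port the whitelist allows elsewhere; it is flagged only because the role and process context are checked as well.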

Ultimately, security must be orchestrated and automated to meet the complex computing world we have moved into and to reduce the overhead placed on people. And it must scale to meet the challenges presented by distributed computing. This requires new considerations and retiring a few "truths" from the prior era of information security.
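As one small illustration of what automating away policy debt (point 4) might look like, the sketch below lists firewall rules that have not matched any traffic for a while, so that a person can review them for decommissioning. It is a sketch under stated assumptions, not a description of any vendor's tooling: the `Rule` record, the rule names, the dates, and the 90-day threshold are all hypothetical, and it assumes your firewall can export a last-hit date per rule.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    """A firewall rule with the date it last matched traffic (hypothetical schema)."""
    rule_id: str
    last_hit: Optional[date]  # None means the rule has never matched


def stale_rules(rules: List[Rule], today: date, max_idle_days: int = 90) -> List[Rule]:
    """Return rules that never matched or last matched before the idle cutoff."""
    cutoff = today - timedelta(days=max_idle_days)
    return [r for r in rules if r.last_hit is None or r.last_hit < cutoff]


# Illustrative data only.
rules = [
    Rule("allow-web-443", date(2024, 5, 30)),
    Rule("allow-old-ftp", date(2021, 1, 12)),
    Rule("allow-test-8080", None),
]

candidates = stale_rules(rules, today=date(2024, 6, 1))
print([r.rule_id for r in candidates])  # ['allow-old-ftp', 'allow-test-8080']
```

The output is a list of review candidates, not an automatic deletion list: a rule that rarely fires may still guard something important, so the final call stays with a person.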

According to a report in the Financial Times, Google is phasing out the use of Microsoft's Windows within the company because of security concerns. Citing several Google employees, the FT reports that new hires are offered the option of using Apple Mac systems or PCs running Linux. The move is believed to be related to a directive issued after Google's Chinese operations were attacked in January. In that attack, Chinese hackers took advantage of vulnerabilities in Internet Explorer on a Windows PC used by a Google employee and from there gained deeper access to Google's single sign-on service.


In the Career Compass program, the initial exercise focuses on identifying and distilling five key elements that differentiate the participant from others.


On the face of it, the field of information security appears to be a mature, well-defined, and accomplished branch of computer science.
